Continuing with the theme of my last DW comment – ‘everything is new, yet everything remains the same’ (to paraphrase the rather more elegant French original) – this issue of DW includes a major article on Immersed Computing. The idea of liquid cooling has been around for quite some time, but has never really gained major traction. I suspect that this is almost entirely down to the fact that the combination of liquids, electricity and IT hardware scares most of us. Simplistically, I suspect we have all dropped, or know of someone who has dropped, a mobile phone/tablet/iPad etc. into the bath/down the toilet etc. and we know that the drenched item never quite works the same again, if at all!

So, no matter how elegant, sensible and trustworthy liquid cooling might sound, there’s always that fear that, if something does go wrong, it’s not going to sound too clever if the boss discovers that the data centre failed because of some kind of liquid leak or flood. No matter that the cooling technologies that are used in the data centre owe more to the ancient Greeks than to any major 21st century technology breakthrough.

However, as the quest for digital transformation gains momentum, it’s becoming increasingly obvious that the folks that are embracing this objective most successfully are those that are prepared to challenge the received wisdom of generations of IT and data centre specialists. It might not be necessary to start with a completely blank piece of paper, but it’s certainly a good idea to ensure that your sheet has a margin where you can park all the tried and trusted ideas, and bring them in where it does not make sense to embrace some of the more recent ideas. And I say ideas, because most, if not all, of the technologies these ideas are based upon have been around for quite a while. What’s changed is that, where once these technologies were slow and inefficient, many of them are now reaching a level of maturity and reliability that makes them viable for use in the world of IT.

Liquid cooling (along with Cloud, virtualisation, the edge, SSDs, IoT…) might not be for everyone, but you’d be foolish to ignore the claims of the technology without examining its potential. If I’ve understood it correctly, not only does it keep your IT hardware nice and comfortable, there’s also the possibility for using the waste heat from the process. Scandinavia and other parts of mainland Europe in particular seem to like the idea of re-purposing waste energy and, while it doesn’t always make financial sense, it’s just another ‘new’ idea to consider.

Of course, all of the new ideas and technologies currently being promoted across the IT universe only make true sense if all parts of the business work together. So, if you want to become a truly digital business, it’s time to knock down those silos, tear down the divides between departments and make sure that everyone understands what’s available and what it can do for the overall business. Yep, you need to establish a working group that includes data centres, IT, sales, marketing, finance, compliance, HR and more. Not easy, but pretty much essential to ensure that the impact of any idea under consideration is fully understood by all.

New research reveals 80 percent of companies at risk of being left behind as most digital innovation projects fail to meet expectations.

Despite spending millions of dollars on digital transformation in the past year, enterprises still feel they are at significant risk of being left behind by their industries, research from Couchbase shows. In the survey of 450 heads of digital transformation for enterprises across the U.S., U.K., France, and Germany, 80 percent are at risk of being left behind by digital transformation while 54 percent believe organizations that don’t keep up with digital transformation will go out of business or be absorbed by a competitor within four years. And IT leaders are also at risk, with 73 percent believing they could be fired as the result of a poorly implemented or failing digital project.

Other findings include:

“Our study puts a spotlight on the harsh reality that despite allocating millions of dollars towards digital transformation projects, most companies are only seeing marginal returns and realizing this trajectory won’t enable them to compete effectively in the future,” said Matt Cain, CEO of Couchbase. “With 87 percent of IT leaders concerned that their revenue will drop if they don’t significantly improve their customers’ experiences, it’s critical that they focus on projects designed to increase customer engagement. Key to succeeding here is selecting the right underlying database technology that can leverage dynamic data to its full potential across any platform and deliver the personal, highly responsive experiences that customers are demanding today.”

Ninety percent of IT leaders said their plans to use data for new digital services were limited by factors such as the complexity of using multiple technologies or a lack of resources, as well as reliance on legacy database technology.

Survey respondents identified specific issues with legacy databases that could lead to digital projects underperforming:

“Historically, some enterprises haven’t done well at using data to improve customer experience, which is why digitally native companies have made some giant inroads in traditionally brick & mortar businesses,” said John A. De Goes, CTO of SlamData Inc. “If all enterprises want to thrive, they need the confidence, ability, and technology to reinvigorate the customer experience. They need a revolution in the way they use data, to transform the customer experience and provide a data-driven way of truly engaging with end-users.”

Findings from CITO Research and Commvault reveal that while business leaders are rapidly embracing the cloud, 81 percent are very concerned about missing out on new cloud advancements.

Commvault has published the results of a new executive survey that found that 81 percent of C-level and other IT leaders are either extremely concerned or very concerned about missing out on cloud advancements. The survey, which was conducted in partnership with IT research firm CITO Research, demonstrates that CEOs, CIOs and CTOs are experiencing serious cloud Fear of Missing Out (FOMO).

Alert Logic has published the results of a comprehensive research study, “Cybersecurity Trends 2017 Spotlight Report,” that explores the latest cybersecurity trends and organisational investment priorities among companies in the UK, Benelux and Nordics.

Conducted amongst 317 security professionals, the survey indicates that while cloud adoption is on the rise, the top concern is how to secure data in the cloud and protect against data loss (48 per cent). The next two biggest priorities for security professionals were threats to data privacy (43 per cent) and regulatory compliance (39 per cent).

The study also examined the top constraints faced by these organisations in securing cloud computing infrastructures. The study found that organisations lack internal security resources and expertise to cope with the growing demands of protecting data, systems and applications against increasingly sophisticated threats (42 per cent). This is closely followed by a desire to reduce the cost of security (33 per cent), moving to continuous 24x7 security coverage (29 per cent), improving compliance (24 per cent) and increasing the speed of response to incidents (20 per cent).

Public cloud platform providers like Amazon Web Services (AWS), Microsoft Azure and Google Cloud Platform offer many security measures, but organisations are ultimately responsible for securing their own data and the applications running on those cloud platforms.

According to Verizon’s recent security report, attacks on web applications are now the no. 1 source of enterprise data breaches, up 300 per cent since 2014. Similarly, the report found cybersecurity professionals – more than half of survey participants – to be most concerned about customer-facing web applications introducing security risk to their business (53 per cent). This is followed by mobile applications (48 per cent), desktop applications (33 per cent) and business applications such as ERP platforms (31 per cent). Application-related breaches have negative consequences and can lead to revenue loss, significant recovery expense, and damaged reputation.

“Web applications are the most significant source of breaches for organisations leveraging cloud and cloud hybrid computing infrastructures,” said Oliver Pinson-Roxburgh, EMEA Director at Alert Logic. “They are complex, with a large attack surface that can be compromised at any layer of the application stack and often utilise open source and third-party development tools that can introduce vulnerabilities into an enterprise.”

Organisations can implement incentives to prevent gaps in the security policy of an application or to avoid vulnerabilities in the underlying system that are caused by flaws in the design, development, deployment, upgrade, maintenance or database of the application. Additionally, many businesses turn to cloud security vendors with a “products + services” strategy rather than technologies alone to fight web application attacks. Businesses increasingly find that cloud-native security tools, combined with 24x7 security monitoring by security and compliance experts, are the best way to secure their sensitive data – and the sensitive data of their customers – in the cloud.

“A multi-layer web application attack defence is the cornerstone of any effective cloud security solution and strategy,” said Pinson-Roxburgh.

A new update of the Worldwide Semiannual Small and Medium Business Spending Guide from International Data Corporation (IDC) forecasts that total IT spending by small and medium-size businesses (SMBs) will approach $568 billion in 2017 and increase by more than $100 billion to exceed $676 billion in 2021. With a five-year compound annual growth rate (CAGR) of 4.5%, spending by businesses with fewer than 1,000 employees on IT hardware, software, and services, including business services, is expected to be slightly stronger than IDC's previous forecast.
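For readers who want to sanity-check growth figures like these, the compound annual growth rate between two totals can be worked out in a few lines. The sketch below is illustrative only: the function name `cagr` is hypothetical, and it uses the 2017 and 2021 totals quoted above over four years, whereas IDC's 4.5% figure is a five-year rate whose base-year value is not given here. The result should therefore land close to, but not exactly on, the published rate.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.05 means 5%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    # Standard formula: (end / start) ** (1 / years) - 1
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted in the text, in billions of US dollars.
smb_2017 = 568.0
smb_2021 = 676.0

# Four growth periods separate 2017 and 2021 (an assumption of this sketch;
# IDC's own figure is calculated over a five-year period).
rate = cagr(smb_2017, smb_2021, years=2021 - 2017)
print(f"Implied 2017-2021 CAGR: {rate:.1%}")
```

The same helper can be pointed at any pair of forecast totals, provided the number of years matches the period the totals actually span.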

"SMB IT spending growth continues to track about two percentage points higher than GDP growth across regions. But beneath that slowly rising tide are faster moving currents that reflect the changing ways SMBs are acquiring and deploying technology," said Ray Boggs, vice president, SMB Research at IDC.

SMBs around the world are increasingly interested in investing in resources to improve employee productivity and improve their competitive positions. Boggs noted that while SMBs, especially smaller ones, have immediate tactical needs to sharpen performance, they are also looking to coordinate resources in a meaningful way. For many this will be an important step towards Digital Transformation (DX).

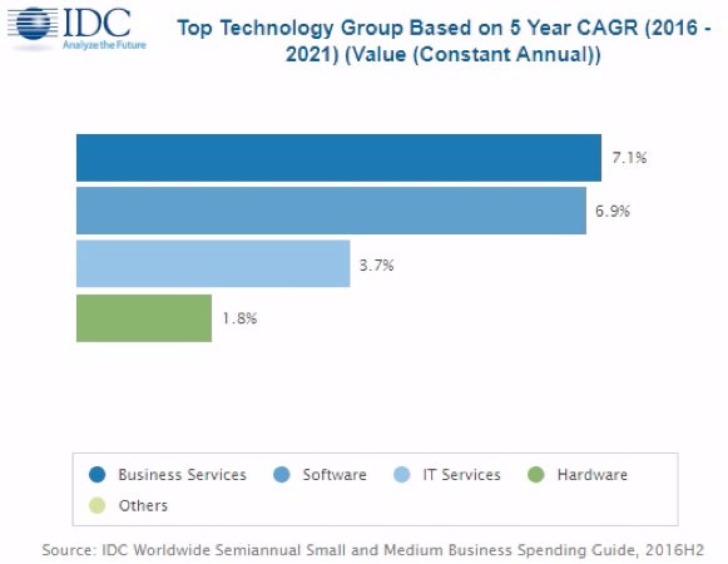

SMBs will spread their IT investments about equally across the three major categories – hardware, software, and IT services – with these categories accounting for more than 85% of total SMB technology spending worldwide. While hardware purchases currently represent the largest share of this spending, IDC expects 2019 to be the watershed year when software and IT services spending both surpass hardware spending. The smallest of the major categories – business services – will see the greatest spending growth of the four technology categories at 7.1% CAGR, followed closely by software (6.9% CAGR).

One third of all SMB software purchases in 2017 will be from the top 3 applications categories: enterprise resource management (ERM), customer relationship management (CRM), and content applications. Application development & deployment and system infrastructure software will also be key areas for SMB software investment. Hardware spending will be led by purchases of PCs and peripherals, which accounted for almost half of SMB hardware spending in 2016 (49.6%), a share that will decline throughout the forecast period to 43.3%. SMB services spending is divided between IT services and business services. While SMB spending on IT services will continue to be more than twice that of business services throughout the forecast period, business services' share is growing, with spending growth roughly twice that of IT services (7.1% vs. 3.7% CAGR).

Medium-sized businesses (100-499 employees) will be the largest market throughout the 2016-2021 forecast with 38% of worldwide SMB IT products and services revenues coming from this group of companies. The remaining revenues will be generated about equally by large businesses (500-999 employees) and small businesses/small offices (1-99 employees). Medium and large firms will also experience the strongest spending growth with CAGRs of 4.6% and 4.5% respectively, slightly above small business spending growth of 4.4%. The SMB opportunity for both near-term and long-term IT spending growth extends across all company size and technology categories.

"The Western European SMB market is big and growing even if European SMBs traditionally show a lower level of IT sophistication than their bigger counterparts and therefore they can represent a difficult target market. In this context we see today the rise of SMBs that were born in the digital era, that are very innovative and attracted by 3rd Platform and Innovation Accelerators (particularly cloud, mobility, and IoT). Even if these companies represent only a small percentage of the overall SMB market, they can set the scene and pave the way to a broader adoption of innovative IT solutions," said Angela Vacca, senior research manager, Customer Insights & Analysis.

The market for governance, risk and compliance (GRC) software is expected to experience strong growth as business leaders look for solutions to meet the challenges of regulatory change, cybersecurity threats, third-party exposure, and reputation risk. In the first forecast to size the overall GRC software market, International Data Corporation (IDC) sees worldwide revenues reaching $11.8 billion in 2021, growing at a compound annual rate of 6.7% over the 2016-2021 forecast period.

A number of factors are driving the growth in demand for GRC applications. Regulatory compliance has become increasingly complex and corporate governance, risk and compliance initiatives have come under greater scrutiny. Given the financial and reputational impact of high visibility compliance and security breaches, risk management has become a strategic level conversation, discussed among the C-suite and corporate board. At the same time, risk management responsibilities have started to shift downward toward the line of business owner as the first line of defense, making user engagement, ease of use, and integration with other enterprise applications just as important as reporting.

"Successful GRC vendors are developing more intuitive and configurable platforms, providing expanded integration and content options, and focusing on user engagement through automated reporting, alerting, and mobile accessibility," said Angela Gelnaw, senior research analyst, Legal, Risk & Compliance Solutions.

Another important factor driving growth in the GRC market is the rise of cloud solutions, which are growing faster than the overall market. Adoption of GRC applications among small and medium-sized businesses, line of business managers, and less-regulated industries has been central to the growth of these cloud-based solutions.

IDC defines governance, risk and compliance software as the aggregation of the tools required to help an enterprise identify, track, and analyze enterprise and technology risks and to monitor and manage corporate and IT governance and compliance initiatives to enhance performance and stay in compliance with global laws and regulations, industry standards, and company policies. IDC recognizes five segments in the GRC software market: GRC Integrated Suites, Corporate Governance & Compliance Management applications, Enterprise Risk Management applications, Audit Management solutions, and Business Resiliency applications. The GRC Integrated Suites segment comprises about 20% of the overall market and is expected to experience healthy growth throughout the forecast. The rest of the GRC market is fragmented across the other four segments.

Worldwide revenues for the augmented reality and virtual reality (AR/VR) market are forecast to increase by 100% or more over each of the next four years, according to the latest update to the Worldwide Semiannual Augmented and Virtual Reality Spending Guide from the International Data Corporation (IDC). Total spending on AR/VR products and services is expected to soar from $11.4 billion in 2017 to nearly $215 billion in 2021, achieving a compound annual growth rate (CAGR) of 113.2% along the way.

The United States will be the region with the largest AR/VR spending total in 2017 ($3.2 billion), followed by Asia/Pacific (excluding Japan) (APeJ) ($3.0 billion) and Western Europe ($2.0 billion). But then things get interesting as APeJ jumps ahead of the U.S. in total spending for two years before its growth rate starts to slow in 2019. The U.S. then pushes back into the top position in 2020 driven by accelerating growth in the latter years of the forecast. Meanwhile, Western Europe is expected to overtake APeJ for the number 2 position in 2021. The regions that will experience the fastest growth over the 2016-2021 forecast period are Canada (145.2% CAGR), Central and Eastern Europe (133.5% CAGR), Western Europe (121.2% CAGR) and the United States (120.5% CAGR).

Within the regions, the industry segments driving AR/VR spending start from roughly the same place, but then evolve quite differently over time. The consumer segment will be the largest source of AR/VR revenues in each region in 2017. In the United States and Western Europe, the next largest segments are discrete manufacturing and process manufacturing. In contrast, the next largest segments in APeJ in 2017 are retail and education. Over the course of the forecast, the consumer segment in the U.S. will be overtaken by process manufacturing, government, discrete manufacturing, retail, construction, transportation, and professional services. In APeJ, the consumer segment will remain the largest area of spending throughout the forecast, followed by education, retail, transportation, and healthcare in 2021. Consumer spending will also lead the way in Western Europe, with discrete manufacturing, retail, and process manufacturing showing strong growth by the end of the forecast.

"The consumer, retail, and manufacturing segments will be the early leaders in AR & VR investment and adoption. However, as we see in the regions, other segments like government, transportation, and education will utilize the transformative capabilities of these technologies," said Marcus Torchia, research director of IDC Customer Insights & Analysis. "With use cases that span both AR & VR environments, we see a significant opportunity for companies to re-cast how users interact in business processes and everyday tasks."

"Augmented and virtual reality are gaining traction in commercial settings and we expect this trend will continue to accelerate," said Tom Mainelli, program vice president, Devices and AR/VR at IDC. "As next-generation hardware begins to appear, industry verticals will be among the first to embrace it. They will be utilizing cutting-edge software and services to do everything from increase worker productivity and safety to entice customers with customized, jaw-dropping experiences."

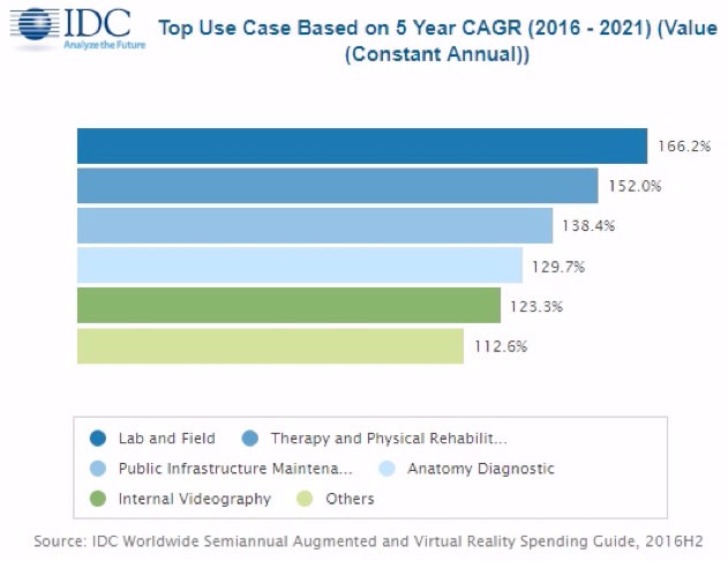

The industry use cases that will attract the largest AR/VR investments are also expected to evolve over the five-year forecast. In 2017, the largest industry use cases will be retail showcasing ($442 million), on-site assembly and safety ($362 million), and process manufacturing training ($309 million). By the end of the forecast, the largest industry use cases will be industrial maintenance ($5.2 billion) and public infrastructure maintenance ($3.6 billion), followed by retail showcasing ($3.2 billion). In contrast, the consumer segment will be dominated by AR and VR games throughout the forecast, with total spending reaching $9.5 billion in 2021. The use cases that will see the fastest growth over the forecast period are lab & field (166.2% CAGR), therapy and physical rehabilitation (152.0% CAGR), and public infrastructure maintenance (138.4% CAGR).

Spending on VR systems, including viewers, software, consulting services, and systems integration services, is forecast to be greater than AR-related spending in 2017 and 2018, largely due to consumer uptake of hardware, games, and paid content. After 2018, AR spending will surge ahead as industries make significant purchases of AR software and viewers.

Achieving broad competence in event-driven IT will be a top three priority for the majority of global enterprise CIOs by 2020, according to Gartner, Inc. Defining an event-centric digital business strategy will be key to delivering on the growth agenda that many CEOs see as their highest business priority.

"Event-driven architecture (EDA) is a key technology approach to delivering this goal," said Anne Thomas, vice president and distinguished analyst at Gartner. "Digital business demands a rapid response to events. Organizations must be able to respond to and take advantage of 'business moments' and these real-time requirements are driving CIOs to make their application software more event-driven."

Because CEOs are focused on growth via digital business, CIOs should focus on defining an event-centric digital business strategy and articulate the business value of EDA. According to the Gartner 2017 CEO survey, 58 percent of CEOs see growth as their highest business priority. CEOs achieve growth by adopting new business models, introducing new products and services, expanding into new markets and geographies, upselling to existing customers and stealing market share from competitors.

"Findings from the survey clearly indicate that CEOs view digital business as their No. 1 opportunity for growth," said Ms. Thomas. "Most CEOs also recognize a triangular relationship between technology, product improvement and growth. They recognize that technology is the fundamental enabler of digital transformation and leading digital companies have figured out that EDA is the 'secret sauce' that gives them a competitive edge."

Event-centric processing is the native architecture for digital business, and to enable growth through digital business, strategic parts of the application portfolio will need to become event-driven. CIOs can use EDA to foster growth by enabling digital business transformation, capitalizing on digital business moments, using modern technologies, accelerating business agility and enabling application modernization.

"Event processing and analytics play a significant role in allowing organizations to capitalize on a business moment," said Ms. Thomas. "A convergence of events generates a business opportunity, and real-time analytics of those events, as well as current data and wider context data, can be used to influence a decision and generate a successful business outcome. But you can't capitalize on the business moment if you don't first recognize the convergence of events and the digital business opportunity."

This is why digital business is so dependent on EDA. The events generated by systems — customers, things and artificial intelligence (AI) — must be digitized so that they can be recognized and processed in real time. EDA will become an essential skill in supporting the transformation by 2018, meaning that application architecture and development teams must develop EDA competency now to prepare for next year's needs. CIOs should identify current projects where EDA can provide the most value to enable adoption of technology innovations such as microservices, the Internet of Things (IoT), AI, machine learning, blockchain and smart contracts.

A legacy application portfolio can be a significant inhibitor to digital business transformation. A digital business technology foundation must support continuous availability, massive scalability, automatic recovery and dynamic extensibility. Digital business applications must also use modern technologies to engage customers, support digital business ecosystems, capitalize on digital moments, and exploit AI and the IoT.

"Modernizing core application systems takes time, and few organizations are in a position to immediately move over to a replacement system," said Ms. Thomas. "Instead, they need to use EDA to stage their modernization efforts, and gradually migrate capabilities while implementing digital transformation."

Market hype and growing interest in artificial intelligence (AI) are pushing established software vendors to introduce AI into their product strategy, creating considerable confusion in the process, according to Gartner, Inc. Analysts predict that by 2020, AI technologies will be virtually pervasive in almost every new software product and service.

In January 2016, the term "artificial intelligence" was not in the top 100 search terms on gartner.com. By May 2017, the term ranked at No. 7, indicating the popularity of the topic and interest from Gartner clients in understanding how AI can and should be used as part of their digital business strategy. Gartner predicts that by 2020, AI will be a top five investment priority for more than 30 percent of CIOs.

"As AI accelerates up the Hype Cycle, many software providers are looking to stake their claim in the biggest gold rush in recent years," said Jim Hare, research vice president at Gartner. "AI offers exciting possibilities, but unfortunately, most vendors are focused on the goal of simply building and marketing an AI-based product rather than first identifying needs, potential uses and the business value to customers."

AI refers to systems that change behaviors without being explicitly programmed, based on data collected, usage analysis and other observations. While there is a widely held fear that AI will replace humans, the reality is that today's AI and machine learning technologies can and do greatly augment human capabilities. Machines can actually do some things better and faster than humans, once trained; the combination of machines and humans can accomplish more together than separately.

To successfully exploit the AI opportunity, technology providers need to understand how to respond to three key issues:

1) Lack of differentiation is creating confusion and delaying purchase decisions

The huge increase in startups and established vendors all claiming to offer AI products without any real differentiation is confusing buyers. More than 1,000 vendors with applications and platforms describe themselves as AI vendors, or say they employ AI in their products.

Similar to greenwashing, in which companies exaggerate the environmental-friendliness of their products or practices for business benefit, many technology vendors are now "AI washing" by applying the AI label a little too indiscriminately, according to Gartner. This widespread use of "AI washing" is already having real consequences for investment in the technology.

To build trust with end-user organisations, vendors should focus on building a collection of case studies with quantifiable results achieved using AI.

"Use the term 'AI' wisely in your sales and marketing materials," Mr. Hare said. "Be clear what differentiates your AI offering and what problem it solves."

2) Proven, less complex machine-learning capabilities can address many end-user needs

Advancements in AI, such as deep learning, are getting a lot of buzz but are obfuscating the value of more straightforward, proven approaches. Gartner recommends that vendors use the simplest approach that can do the job over cutting-edge AI techniques.

3) Organisations lack the skills to evaluate, build and deploy AI solutions

More than half the respondents to Gartner's 2017 AI development strategies survey* indicated that the lack of necessary staff skills was the top challenge to adopting AI in their organisation.

The survey found organisations are currently seeking AI solutions that can improve decision making and process automation. If they had a choice, most organisations would prefer to buy embedded or packaged AI solutions rather than trying to build a custom solution.

"Software vendors need to focus on offering solutions to business problems rather than just cutting-edge technology," said Mr. Hare. "Highlight how your AI solution helps address the skills shortage and how it can deliver value faster than trying to build a custom AI solution in-house."

Although some CEOs might recognise that companies such as Uber or Airbnb are disrupting the business world, many still maintain a wait-and-see attitude. This usually means that they only respond once the threat to their business has been identified. But in the case of digital disruption, this approach simply will not do. There will not be enough time for business owners to respond in a manner that minimises impacts to their company.

By Janelle Hill, VP Distinguished Analyst, Gartner.

The main problem associated with digital disruption is that it often exists outside of the organisation’s normal range of vision. Although CIOs and their business executives acknowledge the potential for digital disruption, they lack the necessary tools, techniques and criteria for identifying and assessing it.

Digital disruptions are more difficult to adapt to than earlier technology-triggered shifts as a result of their virtual nature. In the past, disruptions were typically caused by physical technologies such as PCs or ATMs. Digital disruptions, on the other hand, mostly exist in the virtual world. This makes them hard to detect until after the impact has been felt.

Fortunately, CIOs can lead an organisation to overcome the challenges of digital disruption and equip peers to recognise and deal with digital disruption.

There is a significant difference between real digital disruption and fads – which businesses must recognise. Examples of fads include Pokemon Go or Google Glass. They will incite lots of excitement but have limited impact. A real disruption will completely redefine the market’s needs and potentially cause a significant change in the industry. The introduction of the iPad, for instance, caused changes in application development, impacted revenue of desktop and notebook computer manufacturers, and even changed how humans interact with technology, with FaceTime as the first mobile conferencing application.

Enterprises looking to identify disruptors before it’s too late should set up a “sensing apparatus” to monitor external indicators. These indicators include shifting customer behaviour and consumer trends, as many disruptors originate in the consumer world.

Companies should also pay attention to where venture capitalists are investing and to disruptions in adjacent markets. The sensing apparatus will create a lot of information to handle, so look to data scientists who can be useful in uncovering insights.

Monitoring external industries is new territory for a CIO, but other members of the executive team will be better equipped for such an effort. Depending on the setup of the business, the CIO might look to partner with the CMO, CFO, VP of Supply Chain or the Head of R&D to gain a greater understanding of potential disruptors. In a business-to-business set up, disruption can happen in the supply chain or with the end customer, so it’s best to partner with both the CMO and VP of Supply Chain. For business-to-consumer companies, disruptions are most likely to happen in the customer segment, so the focus should be on the CMO.

CMOs can offer insight into customer and market behaviour. They will also be able to identify potential indicators and will probably have the staff with the skills to analyse the data. In return, CIOs can offer CMOs institutional knowledge about IT systems and why certain systems are set up the way they are to provide perspective on how a potential disruption challenges the status quo.

Once a disruption is identified, the organisation must figure out its response.

Learn more about CIO leadership and how to drive digital innovation to the core of your business at Gartner Symposium/ITxpo 2017, taking place 5-9 November in Barcelona.

DCS talks to Rolf Brink, Founder and CEO of Asperitas, about immersed computing and the tremendous potential value to data centres.

1. Please can you provide us with some background on the company – when/why formed and progress to date?

Asperitas was actually founded on May 2, 2014 by myself and Markus Mandemaker with a completely different focus. We intended to tackle a big problem in the marine industry: system integration on board ocean vessels. We wanted to create a micro cloud based infrastructure, but had to think of a way to create an affordable, lean and mean micro datacentre on board a ship. Think of a rolling ship, salt air, no IT staff and a lack of conditioned rooms. This is how we came up with the idea of immersion. We actually designed a small, mobile, air-tight immersion system which would be cooled with seawater as part of the engine cooling circuit. However, just before we hit the button to start manufacturing the first prototype, we realised that all the other aspects of immersion, combined with our focus on integrating technologies, were a lot more interesting and important for a completely different industry with which we were more familiar: datacentres. This was in April 2015. In the following two years, we focused our efforts on developing an immersion solution suitable for normal datacentre environments, allowing for more sustainability, flexibility and efficiency. We launched the result of this development in March this year. Ever since, we have been in the spotlight for datacentre efficiency and innovation, and our pipeline is gradually filling up.

2. And who are the key personnel involved?

We started with just Markus and myself. We realised that to invent something which is compatible with datacentres, we had to get the right people involved. Since this can be quite challenging, we rely on partners for a lot of the knowledge and expertise we require. In order to allow a fast, high quality scale up with a limited risk, we built an ecosystem of development and delivery partners with the right knowledge and expertise.

This approach has the result that we can remain relatively small ourselves. Our key staff is focused on managing the consortium of partners, market development and delivery management. This is where Maikel Bouricius (Marketing), Leon Lips (Sales) and Els Knijff (Operations) are playing the most important roles.

3. Please can you summarise the Asperitas ‘immersed computing’ proposition?

Asperitas’ Immersed Computing is an integrated approach to IT immersion and the first of its kind that makes immersion viable for datacentres.

The key issue we had to solve when we started developing was the usability aspect. Immersion cooling already exists and is positioned in a niche part of high performance computing. It was actually already patented in the late 60’s by a small US based company called “International Business Machines”. You may have heard of them…

There are however significant challenges when it comes to the practical use, which are sometimes of a lower priority in the HPC industry. This is why we were not so much focused on researching immersion itself, after all, it is already a proven technology. Instead, we were much more interested in addressing the practical implications of adopting immersion. We did extensive research into the reasons why immersion would not break through in the cloud industry, where density challenges are constantly growing. Each reason became an instant design requirement. This has become the foundation of “Immersed Computing”, as opposed to cooling.

Immersed Computing addresses the entire way of work. It focuses on the integration of technologies, handling, tooling, efficiency and vision related to immersion. This results in the end-to-end proposition with our core immersion product at the centre. It includes the self-contained and self-sustained AIC24, the service trolley which is a semi-automatic servicing system, IT service tooling and several types of containment tools. Next to this, it also includes work principles related to liquid management, disaster management and knowledge around material compatibility, including a management environment which generates insight.

4. In more detail, can you talk us through the potential advantages of liquid cooling within the data centre, starting with the potential for significant heat recovery/reuse?

Applying immersion, which is also called “Total Liquid Cooling”, results in an environment where 100% of the IT thermal energy is captured in liquid. Since liquid can contain much more heat and transport this much farther compared to air, it allows you to harvest the heat very easily. We apply an integrated water cooled heat exchanger inside our immersion system and also focus on insulation of the entire system. This means that nearly all heat is captured in the water circuit.

Water is an easy medium to apply and can carry this energy along large distances without significant energy loss. The fact that warm water can be used for cooling, allows datacentres to eliminate chiller systems completely. This is a significant cost saving.

Furthermore, bringing liquid to the rack or to the IT itself removes the need for raised floors, aisle separation schemes and other large scale air handling features inside the datacentre.

Finally, the energy efficiency of the facility is highly improved. Since energy is saved both on the cooling infrastructure and on the IT, by removing the fan overhead, the supporting power infrastructure, such as UPS and no-break systems, can also be reduced.

Other advantages include noise reduction, since we eliminate moving parts from the IT, and increased density and reduced floor space.

5. And there’s also the possibility of allowing for increased temperatures within the data centre?

The application of immersion also allows you to use high cooling temperatures. We have already tested several systems with a water input of 55°C, which is hot water cooling, and you can effectively draw hot water at 60°C directly from our immersion environment. This is of course not for all IT systems and platforms, but it still shows the potential of liquid.

The adoption of liquid in a broader sense allows datacentres to become effective heat producers, especially if they can line up different liquid technologies to create a high temperature difference. This is a process called temperature chaining, and it allows other liquid technologies to contribute to the datacentre’s thermal production. Our whitepaper about the Datacentre of the Future describes a hybrid environment which is compatible with all types of IT, but applies solely water infrastructures and a mix of liquid technologies with the purpose of creating reusable heat. The result is a near-energy neutral datacentre.
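As a rough illustration of temperature chaining, the sketch below models a single water loop passing through several heat-producing stages in series, with each stage raising the water temperature by its heat load divided by the mass flow times the specific heat of water. The stage loads, flow rate and inlet temperature are invented for illustration and are not Asperitas figures; real systems also have losses that this ignores.

```python
# Approximate specific heat of water, J/(kg*K). Assumed textbook value.
WATER_SPECIFIC_HEAT = 4180.0


def chain_outlet_temperatures(inlet_c, mass_flow_kg_s, stage_loads_w):
    """Return the water temperature (deg C) after each stage of a serial loop.

    Each stage adds delta_T = load / (mass_flow * specific_heat).
    Heat losses between stages are ignored in this sketch.
    """
    temperatures = []
    current = inlet_c
    for load_w in stage_loads_w:
        current += load_w / (mass_flow_kg_s * WATER_SPECIFIC_HEAT)
        temperatures.append(current)
    return temperatures


# Hypothetical loop: 20 deg C inlet, 1 kg/s of water, three stages in series.
stage_loads_w = [40_000.0, 60_000.0, 50_000.0]
for number, temp in enumerate(
    chain_outlet_temperatures(20.0, 1.0, stage_loads_w), start=1
):
    print(f"after stage {number}: {temp:.1f} C")
```

The point of the sketch is only that stages placed in series on the same loop accumulate temperature rise, which is what makes the outgoing water more useful for reuse.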

6. And liquid cooling has a smaller footprint than other technologies?

Yes, this is correct. Since liquid has a much higher heat capacity than air, IT can be positioned closer together. Also, a lot of space is traditionally used for airflow. Think about it: to allow air to flow through a rack, you need space in front and at the rear of the rack, solely for airflow. This is greatly reduced with liquid.
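To put a number on the heat capacity point, the following sketch compares how much volume of water and of air has to flow to carry away the same heat load at the same temperature rise. The fluid properties are approximate room-temperature textbook values, and the 10 kW load and 10 K rise are hypothetical; immersion systems use a dielectric liquid around the IT, so water is used here only as the familiar reference liquid.

```python
# Approximate properties near room temperature (assumed textbook values).
WATER = {"density_kg_m3": 997.0, "specific_heat_j_kg_k": 4180.0}
AIR = {"density_kg_m3": 1.2, "specific_heat_j_kg_k": 1005.0}


def volumetric_heat_capacity(fluid):
    """Heat stored per cubic metre per kelvin, in J/(m^3*K)."""
    return fluid["density_kg_m3"] * fluid["specific_heat_j_kg_k"]


def required_flow_m3_s(heat_load_w, delta_t_k, fluid):
    """Volume flow needed to carry heat_load_w with a rise of delta_t_k."""
    return heat_load_w / (volumetric_heat_capacity(fluid) * delta_t_k)


load_w = 10_000.0  # hypothetical 10 kW of IT heat
delta_t_k = 10.0   # hypothetical 10 K temperature rise

water_flow = required_flow_m3_s(load_w, delta_t_k, WATER)
air_flow = required_flow_m3_s(load_w, delta_t_k, AIR)

print(f"water: {water_flow * 1000:.2f} litres per second")
print(f"air:   {air_flow:.2f} cubic metres per second")
print(f"air needs roughly {air_flow / water_flow:.0f} times the volume flow")
```

Under these assumptions, air needs a volume flow a few thousand times larger than water for the same job, which is why so much floor space in an air-cooled room is given over to moving air.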

7. And the maintenance requirements of liquid cooling are less than for other cooling options?

Yes and no. IT should require less maintenance, but liquid cooled IT requires a little bit more time for maintenance. Therefore, on the IT side, there is not much change. The impact can be found on the facility side. Since the cooling infrastructure is drastically changed and simplified, the maintenance focus is also simplified. There are no issues with dust, air quality or moisture. Water circuits require a lot less space than air infrastructures and the elimination of chiller systems reduces the maintenance required.

Especially with a heat producing datacentre for reuse, most of the infrastructure disappears completely. Obviously you don’t need to maintain what you don’t have.

8. And then there’s the added flexibility that liquid cooling brings with it?

This is actually related to the maintenance and minimal requirements for the facility. This means that it becomes a lot easier to find and utilise locations for datacentre space. Ranging from micro-edge facilities to large core datacentres, the overhead installations are greatly reduced, as is the environmental impact. Think about it, immersion makes no sound, requires no chillers, allows for immediate reuse of heat and needs no air. These are perfect ingredients to quickly deploy any type of datacentre environment and solve someone else’s heat challenge at the same time.

9. You talk of the data centre of the future and refer to data centres as ‘information facilities’ – is this message being well-received or are you still being frustrated by the response of ‘we’ve always done it this way, why change?’?

Well, we’ve only recently started sharing our vision of the datacentre of the future and honestly, everybody knows it already. Information is the sole purpose of the datacentre, and every industry professional will agree. The problem is just that most people in the industry seem to forget this bigger picture in their day-to-day business lives and blindly follow existing ways of work.

We knew this would be the most difficult part of our go-to-market: convincing the industry that there is a different, more effective way of looking at efficiency, platforms and resiliency. This is why our strategy is driven by collaboration, sharing and openness. We want to share as much as we can about the ease of liquid and how you can deal with challenges in a different way, and to send out-of-the-box ideas into the world.

10. Indeed, do you think that the majority of data centre owners/operators are ready for the Asperitas message?

I’m not sure, but it looks like it. I certainly hope so, not only for Asperitas’ sake. The industry is causing a lot of energy spillage and the power grids in the world are stretched to their limits. The issues with global warming are real and can only be addressed with smart and integrated approaches to energy challenges. This is where datacentres really can show their potential to address global energy and sustainability challenges. The reality is that the business case for this must be sound before the industry will respond. The liquid approach to datacentre infrastructure is a compelling business case with short ROI timelines of less than 5 years, but a TCO approach to liquid is required to show this effect. So it really comes down to decision makers being open to innovation and change. It will probably take some time for our customers to demonstrate that they can be more competitive with a liquid based facility, before our technologies and ideas are adopted on a large scale.

There’s been much talk of green data centres, but not that much action, do you think that this has to change into the future?

Yes, absolutely, although I don’t necessarily agree with the statement. We have seen an incredible move towards more “efficient” datacentres driven by PUE. PUE however is not an indication of efficiency. As soon as a PUE of 1.3 or lower is achieved, a datacentre could be considered to be “green”. Truth be told, there is too much to say about PUE which undermines the “green” message. Everyone in the industry is familiar with too many ways to manipulate the PUE figures: putting the internal electric grid in the IT balance, raising environmental temperatures which cause fans to work harder, not applying reuse scenarios because moving the energy negatively impacts PUE, and many, many more.

On top of this, datacentres are compared with each other which makes no sense whatsoever. A datacentre in Scandinavia with a PUE of 1.1 can easily be much less efficient than one with a PUE of 1.7 in the Mediterranean area.

PUE has done a terrific job for awareness and improving energy efficiency, but it needs to be abandoned as soon as the facility hits 1.3 in colder regions, or 1.7 in warmer areas. Simply because it gets in the way of today’s innovations. Therefore a new metric must be adopted to allow for real and smart energy efficiency beyond the datacentre itself.
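PUE is defined as total facility power divided by IT power. The sketch below uses invented figures to show one of the effects mentioned above: when server fans are metered as part of the IT load, raising room temperature can lower cooling power and raise fan power, so the PUE improves even though the facility as a whole draws more. All numbers are hypothetical and chosen only to make the arithmetic visible.

```python
def pue(total_facility_kw, it_kw):
    """Power Usage Effectiveness: total facility power divided by IT power."""
    return total_facility_kw / it_kw


def summarise(facility):
    """Return (total_kw, pue) with server fans counted inside the IT load."""
    it_kw = facility["it_without_fans_kw"] + facility["server_fans_kw"]
    total_kw = it_kw + facility["cooling_and_other_kw"]
    return total_kw, pue(total_kw, it_kw)


# Hypothetical facility before and after raising the room temperature.
before = {"it_without_fans_kw": 900.0, "server_fans_kw": 100.0,
          "cooling_and_other_kw": 300.0}
after = {"it_without_fans_kw": 900.0, "server_fans_kw": 250.0,
         "cooling_and_other_kw": 200.0}

for label, facility in (("before", before), ("after", after)):
    total_kw, value = summarise(facility)
    print(f"{label}: total {total_kw:.0f} kW, PUE {value:.3f}")
```

In this made-up case the total rises from 1,300 kW to 1,350 kW while the PUE falls from 1.300 to about 1.174, so the metric moves in the opposite direction to actual consumption.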

11. And is a carbon-neutral data centre, or at least one that produces almost as much waste heat/energy for re-use as it consumes, a very real possibility?

Yes, absolutely! As long as we’re talking about a carbon neutral OPERATION. We can run the datacentre with a carbon neutral approach to start with. This is fully feasible with technologies available today. Nearly all electrical energy which is consumed by the datacentre can be re-captured as heat which can flow out of the facility in the shape of hot water, ready to be consumed by other industries.

However, you have to understand that most of us have no clue about what was involved in the manufacturing process of all technologies which we can find in a datacentre, let alone the carbon impact of everything. This is something which also needs to be addressed. At Asperitas we take this seriously, which is why we manufacture everything in The Netherlands where we can assure circular and environmentally friendly processes. We also have sufficient insight and focus on our supply chain to ensure sustainability for our complete product development and manufacturing.

12. Over and above what we’ve discussed so far, are there any other technologies and/or trends that you see as making an impact on the data centre of the future?

Yes, the global adoption of district heating, and also of low grade heat networks. These are great developments that datacentres can build upon. The numerous smart city developments are also terrific examples which drive the Datacentre of the Future concept. This is all driven by a focus on creating synergy between completely unrelated industries which require heat, like spas, hospitals and hotels, agriculture, food industry, urban farms, households or offices, you name it.

13. And the need for agility?

Especially the agility is something which is optimally addressed with Immersed Computing. New environments can be positioned wherever they are required. New facilities, more capacity in existing facilities, the flexibility of water as the main cooling medium, the flexibility with different technologies in the hybrid model, you name it.

14. And, increasingly, the need for sustainability?

Yes. Sustainability is still too much in the background of doing business. I’m happy that the biggest challenge is already overcome by now though. Sustainability used to be a dirty word, associated with tree hugging. Today it is perceived as “sexy”, good for business and a common sense approach to any industry. This is a really big change in global business.

The next challenge is to make the shift from marketing to real focus and actual investments. Therefore we need to take a really good look at ourselves, our business models and our way of work. Basically, a more holistic approach.

This also means that we’re not only talking about energy, but also about circular economy, not buying or implementing what is not really required and being smart about reducing overhead. At Asperitas we’ve taken this to the next level as well. Everything we do is focused on circularity. Each part of our product can be refurbished or recycled. Even the used oil is not consumed, but will be returned to the manufacturer to get a new purpose.

17. If the greening of the data centre is desired and to be achieved, presumably it does need a radical re-think of the technologies being used, in terms of the data centre infrastructure?

This is where the combination of liquid technologies has to play a big role. There is not a single liquid technology which can address all end-to-end datacentre environments. Especially when it is about running carbon-neutral, more liquid technologies should be adopted in a hybrid model. This way we can apply temperature chaining to achieve really high temperatures in a liquid circuit.

18. We seem to be heading towards a hybrid data centre world – a mixture of web-scale/large, centralised facilities and more and more remote/edge, smaller facilities – how does Asperitas’s technology fit in both these scenarios?

Well, the Immersed Computing approach allows for robust datacentre environments while at the same time allowing you to get into places where traditional datacentre builds can never go. The distributed datacentre model, with core and edge facilities, is addressed in such a way that sites can be qualified based on energy reuse and drastically minimised “overhead” installations for power and cooling.

20. Moving on to Asperitas the company, you have a range of business partners who work with the company. Can you share some of the work that goes on in this development consortium?

We involved leading companies to aid us, so we could progress very fast and at the right level of professionalism. The consortium consists of several different types of partners, mainly development, technology and experience partners. All partners in the consortium have contributed to the development with some type of investment: not just money, but also knowledge, man hours, technology, network or exposure.

The work that goes on is mostly related to creating smart solutions for dealing with Immersed Computing. Everything ranging from designing with a sketchbook, to CAD, to prototyping, to testing, to re-engineering and manufacturing is done with our business partners.

Other business partners are closely involved with us in order to develop fundamentally new approaches for the industry. An example of this is the collaborative work which we have done together with our partner Tebodin on our whitepaper about the Datacentre of the Future.

21. And Asperitas also has a wide range of sponsors, divided up into advisory, development and technology partners. Again, could you share with us some of the work that is carried out within these relationships?

The actual product development has been done in close collaboration with our high quality engineering partners like ADSE (Aircraft Development and Systems Engineering), Brink Industrial (a large steel manufacturer in The Netherlands), Perf-IT (management and monitoring), Aqualectra (electrical and control) and Total (oil).

The technology partners have played a significant role in the development focus of specific parts of our solution, like Schleifenbauer (PDUs), SuperMicro, Bachmann, Starline and Mink.

Finally, there is a range of advisory partners who helped us to focus on the viability of the end-to-end approach. Partners like Leeds University, Netherlands Enterprise Agency and GreenIT are great examples of this. Other advisory partners have been able to help us focus on the real usability priorities due to their extensive experience with existing immersion technologies. Partners like Vienna Scientific Cluster and ClusterVision have been very valuable to this focus.

22. In terms of your routes to market, what coverage does Asperitas have to date?

That’s hard to say. We’re focused on cloud providers and we’re in the spotlight when it comes to the infrastructures for cloud at the moment. We’ve gained a lot of traction with the media by sharing a lot of our vision and about the way we do things. Media however is not a measure of the willingness to adopt our technology. Looking at the response from potential customers however, we seem to be getting pretty far. We’re lining up with the biggest and the smallest of businesses and we’re having very good discussions with varying levels of decision makers.

Social media and digital media are large contributors to our market exposure today and I’d say that our coverage looks quite good. We have brought the AIC24 to various events including Datacentre Transformation, Cloud Expo Europe and the ISC and we make a point not to just bring the system, but also run it live to prove the point about simplicity, usability and flexibility. This shows how easy it is to adopt Immersed Computing. We have had great attention at the events so far and you will see us more often in the second half of 2017.

23. And what are your expansion plans?

Opportunistic really. We’ll see where the market takes us and we’ll expand accordingly. We originally planned to start in The Netherlands, but we’re already getting a lot of attention on a larger scale. This means that we’ll be looking at a UK presence, but we’re also considering alternative ways of addressing the market requirements. We’ve already got some ideas on delivery and support through partner channels which allows us to address a larger part of the industry.

24. And do you have any customer success stories and/or trials that you can share with us, please?

We have an early prototype installation which has been running since November 2016 at our development partner VSC, and they wish to continue using this system much longer than we ever planned for. They are already running a large immersion environment and they are very fond of our approach to immersion, which is a great boost.

Another example started as a demo site we opened at Schuberg Philis a bit more than one month ago, in June. It was meant to be a temporary demo site, but now they don’t want us to take it away again. That demo system is now used to provide compute power for cancer research, and we turned them into a customer.

Ever since our launch in March, we’re getting more and more interest. We’re preparing for more implementations and our pipeline is really building up at the moment. Hopefully by this time next year we’ll have a good portfolio of customer successes.

25. Finally, do you think that the truly optimised data centre environment will only be brought about when the facilities and IT folks actually work as one big team, and not in their separate silos?

Yes. It is a common problem with any holistic approach to bringing efficiency to a business. Different silos operate within their own boundaries and rarely communicate. Each silo has its own responsibilities and is accountable for each of them. Breaking down walls is the basis of any disruption, and those organisations which can do without these walls are the ones which can make a big difference and become more competitive than others, simply because they can take efficiency beyond the boundaries of individual disciplines.

26. Any other comments?

Asperitas is a very open organisation and driven by partnerships. We are also driven by sharing as much about liquid and our technology as we can. This means that we’ll be sharing a lot more in the upcoming period and there are many more visions which we will share and pursue. We will bring as much as we can into practice and welcome anyone who wishes to join in this voyage, regardless of whether they are collaborators, suppliers, customers or competitors.

It is often difficult to see the full viability of our technologies and ideas since it is difficult to get a fairly complicated message across. This comes from the fundamentally different way we look at datacentres. For example, we never talk about energy consumption or the cooling of IT. Instead, we talk about thermal production and IT health. This in turn illustrates how we look at IT equipment and the maintenance and facility aspects. This is what brings us to these fundamentally different views on information versus physical footprint.

We have learned that experiencing the technology itself plays a critical role in understanding us, our messages and the fundamentally different foundation upon which a datacentre can be built.

Datacentres are big electrical heaters and we cool them. Why not make them more effective heaters instead?

Pervasive visibility and automation are critical to the rapid detection and mitigation of sophisticated security risks.

By Adrian Rowley, Technical Director EMEA for Gigamon.

It’s a sad fact, but many cybersecurity professionals have been forced to come to terms with the inevitability of security breaches resulting from two key factors. Security teams face ever-greater challenges in combatting data breaches due to the sheer speed of data traversing networks, which leaves insufficient time for decision-making related to potential threats; and the continuous growth in the number of attackers and the ecosystem of resources available to break through standard defences and propagate undetected across most network infrastructures.

The traditional security focus of instrumenting networks for prevention, and concentrating resources on a perimeter that can no longer be defined, is increasingly ineffective in today’s environment. Organisations are also hampered by limited visibility, extraordinary costs, growing infrastructure complexity, and reliance on manual processes to address security incidents.

At 100Gb network speeds, the inter-packet gap of 6.7 nanoseconds surpasses an organisation’s ability to perform intelligent application security, threat detection or inspection. Security Operations teams and technology are therefore being overwhelmed in trying to manage and mitigate an increasing volume and variety of incidents. And this machine-to-human fight favours the attacker, leaving organisations severely disadvantaged.
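The 6.7 nanosecond figure appears to correspond to the arrival interval of back-to-back minimum-size Ethernet frames at line rate. The sketch below reproduces that arithmetic, assuming standard Ethernet framing: a 64-byte minimum frame plus 8 bytes of preamble and start-of-frame delimiter and a 12-byte inter-frame gap. The function name is illustrative only.

```python
PREAMBLE_AND_SFD_BYTES = 8   # preamble plus start-of-frame delimiter
INTERFRAME_GAP_BYTES = 12    # minimum idle time between frames, in byte times
MIN_FRAME_BYTES = 64         # minimum Ethernet frame size


def frame_interval_ns(frame_bytes, link_bps):
    """Time between the starts of back-to-back frames, in nanoseconds."""
    wire_bits = (frame_bytes + PREAMBLE_AND_SFD_BYTES + INTERFRAME_GAP_BYTES) * 8
    return wire_bits / link_bps * 1e9


for label, rate_bps in (("10Gb", 10e9), ("100Gb", 100e9)):
    interval = frame_interval_ns(MIN_FRAME_BYTES, rate_bps)
    frames_per_second = 1e9 / interval
    print(f"{label}: {interval:.2f} ns per frame, "
          f"{frames_per_second / 1e6:.1f} million frames per second")
```

At 100Gb this works out to 6.72 ns per minimum-size frame, or roughly 149 million frames per second, which is the time budget any inline inspection has to meet in the worst case.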

How best to address this critical situation? There is considerable industry recognition of the need for integrated and automated security architectures that help to mitigate these kinds of risks. According to Gartner1, for example, “Strategies for business continuity and disaster recovery will fundamentally change as enterprise and information are spread everywhere. Continuous visibility and understanding of systems, services, assets and partners is needed as digital business infrastructure will be in a state of constant flux.”

Meanwhile, Dan Cummins, senior analyst at 451 Research, has said that “Siloed security systems and data cannot accelerate or provide a basis for advanced prevention, detection and remediation activities, nor for process-driven security management. To address current threats and unseen risks ahead, organisations need to move towards a unified, collaborative and data-powered security framework that enables shorter cycle times for incident response and resolution while ensuring network performance and business continuity.”

The last few years have seen an exponential increase in the number of different security tools on the market, and there’s also been a lot of talk about machine learning, artificial intelligence (AI) and security orchestration. The problem is that it hasn’t been clear how these play together to improve a company’s security posture. If you deploy these technologies are you more secure, less secure, or in the same situation as before? This has been difficult to assess because there hasn’t been a model against which organisations can measure security success or understand where any gaps remain.

Organisations are also at different phases of the security cycle. Many are in the first stage of doing the basics of providing firewalls, segmentation and multi-factor authentication. Some have moved beyond this and are beginning to build out a baseline, leveraging machine learning techniques, big data and open source and commercial tools. Only a few are in the automation phase as this is relatively new - although we expect to see more organisations starting to deploy aspects of automation and orchestration in 2018.

Another problem is that organisations need a model that addresses practical industry challenges including a massive shortage of skilled personnel, exponential growth in the volume of attacks, and manual, siloed processes. People are therefore asking questions such as how can we automate, how do we deal with the API explosion, and what is the role of machine learning and AI?

Those organisations that have started to build out their infrastructure have tended to do so in a relatively ad hoc manner, which may or may not take them to where they want to be. But one example of the ‘integrated and automated security architectures’ cited by Gartner is Gigamon’s new Defender Lifecycle Model, which is all about providing a structured approach that organisations can use to get to their desired outcome, quickly and efficiently.

Focused on a foundational layer of pervasive visibility and four key pillars - prevention, detection, prediction and containment - the model utilises a security delivery platform to deliver security services that can learn, detect, predict and contain threats throughout the attack lifecycle. This integrates machine learning, AI and security workflow automation to address the increasing speed, volume and polymorphic nature of network cyber threats, automate and accelerate threat identification and mitigation, and shift control and advantage away from the attacker and back to the defender.

The model also provides the intelligence, scale and flexibility to integrate with security tools such as firewalls and intrusion prevention systems to automate and accelerate threat containment and mitigation. With it, security professionals can map out the role of the various technologies involved in the threat ‘kill chain’, gain a better understanding of overall security readiness and gaps, understand how to automate and eliminate human and process bottlenecks to more effectively stay ahead of threats, and ultimately strengthen their organisation’s overall security risk posture and efficiencies.

In moving to an automation model, you can begin to address two key challenges: the shortage of skilled personnel, and the need to respond quickly enough to contain attacks and prevent them from propagating.

Another big advantage is easy access to data. Organisations can get data from routers, firewalls, endpoints, domain controllers etc. but the challenge is actually getting hold of it. Each of these entities is controlled by a different part of the IT organisation, and coordinating across these siloed departments is a challenge. Many of these approaches also add load on the devices, impacting their performance. So, simply leveraging network traffic becomes a shortcut to getting access to content-rich information.

In addition, machine learning addresses the big data challenge of security, which is gathering context from across an entire infrastructure and building a baseline, while AI applies algorithmic techniques on top of that to surface anomalies. Automation and orchestration then provide the ability to act on those anomalies.
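The baseline, anomaly and action stages described above can be illustrated with a minimal Python sketch. It is not Gigamon’s implementation: the connections-per-minute figures, the function names and the three-standard-deviation threshold are all assumptions chosen for illustration, and a simple statistical test stands in for the machine learning and AI techniques a real platform would use.

```python
from statistics import mean, stdev


def build_baseline(samples):
    """Summarise normal behaviour as a (mean, standard deviation) pair."""
    return mean(samples), stdev(samples)


def find_anomalies(baseline, observations, threshold=3.0):
    """Return observations more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # A perfectly flat baseline: anything different is anomalous.
        return [x for x in observations if x != mu]
    return [x for x in observations if abs(x - mu) / sigma > threshold]


def respond(anomalies):
    """Hypothetical automation step: describe one containment action per anomaly."""
    return [f"quarantine flow with {value} connections/min" for value in anomalies]


# Hypothetical connections-per-minute counts observed during normal operation.
normal_traffic = [100, 104, 98, 101, 97, 103, 99, 102]
baseline = build_baseline(normal_traffic)

# New observations, one of which is far outside the baseline.
new_traffic = [101, 250, 99]
anomalies = find_anomalies(baseline, new_traffic)
actions = respond(anomalies)
```

Here only the reading of 250 is flagged, and the automation step produces a single containment action for it. In practice the baseline would be built from far richer context than a single metric.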

Gigamon plays into several of these phases: machine learning, automation and containment, and initial basic hygiene. For example, as machine learning is all about big data and providing ways to assimilate large volumes of data and build a baseline, Gigamon provides easy access to content-rich data that allows companies to build that baseline. In terms of automation, the platform offers an alternative to dealing with the massive API explosion by providing a default API to orchestrate the various solutions involved. And if you want to deploy a basic good hygiene technique like firewalls, Gigamon makes it easy to do so without having to deal with network maintenance windows or outages.

In this respect, Gigamon is not only an enabler of the machine learning, AI, automation and containment layers - it’s a foundation upon which enterprise network defences can be layered and, more importantly, efficiently leveraged.

1 Gartner, Inc., Use a CARTA Strategic Approach to Embrace Digital Business Opportunities in an Era of Advanced Threats, Neil MacDonald, Felix Gaehtgens, May 22, 2017.

Any business that wants to compete in the digital age has to take advantage of digital channels in every way possible. In fact, beyond leveraging the smaller-scale applications we’re all familiar with, all organisations should be undertaking digital transformation at a fundamental level. At its heart, digital transformation is about optimally connecting people, processes, and content to achieve a competitive advantage.

By John Newton, CTO and Founder of Alfresco.

The digital enterprise works differently from the pre-digital enterprise. It’s globally connected. It’s immediately responsive. It’s massively collaborative. It’s mobile, data-driven, and always on. It never stops changing. Yet many are not taking this shift in business thinking on board and are lagging behind. There are five ways to tell if your company will flourish or vanish in the digital economy:

Addressing and focusing business activities on new and adjacent markets sets digital-first leaders apart. Avoiding disruption from new entrants and start-ups is key, and their priorities lie in optimising the customer experience and constantly introducing new products. Those that lag behind, by contrast, often concentrate on cost-cutting, efficiency and increasing revenue from existing products rather than on how to evolve.

Successful digital transformation must start at the top. It is a board level agenda item and the CEO must drive and take responsibility for the programme. This should be seen as the foundation for businesses in the future. If digital transformation duties are delegated down the command tree, as though it is just under the umbrella of technology and not fully prioritised in its own right, then this results in companies falling further behind.

Lagging companies' objectives are all over the place, and if they are motivated, they normally prioritise refreshing technology and cutting costs. In companies that are digital leaders, there is a focused agenda for digital transformation. This is often centred on engaging customers more effectively and building out their ecosystem using new and digital technology.

Looking forward, we seem to be on the cusp of major changes in digital transformation, with disruptors revamping ecosystems to connect internal systems to their customers, partners and suppliers more efficiently. This will have the effect of transforming how they develop their value chains to be more digital and integrated – giving them a real competitive advantage. And if you think digital leaders are ahead today, note that their intentions over the next three years are even more aggressive, to ensure they stay ahead.

The majority of digital leaders have embraced open standards, open APIs and open source. Openness and open thinking are a core part of digital transformation. This is critical to making the digital connections to customers and partners, enabling them to transform how they do their business in a more agile and flexible way.

In fact, recent research found that roughly half of the CEOs at fast-growing companies are taking the bull by the horns. What are they doing right? There are three main things that give them a competitive edge:

In order to achieve this, there are three levers that organisations can adopt to catalyse a new approach to their business and deploy a successful programme toward digital transformation:

Design thinking -- The optimal flow between the user, what they need and their experience should drive business technology decisions.

Open thinking -- Collaboration is a powerful business accelerator. Innovation from both inside and outside the organisation is encouraged to drive new initiatives.

Platform thinking -- A single, central solution through which you can route information, automate processes, and integrate third-party innovation. This results in the exchange of capabilities and data in a manner that creates added value and repeatable experiences, and connects users with information and/or services quickly and meaningfully.

Today's corporate leaders must realise that they need to disrupt or risk being disrupted. Those that are not yet thinking about how they will innovate with new approaches and leverage technology to its utmost are at risk. Digital transformation is not just a critical stepping stone to success, but key to an organisation's very survival.

The ever-evolving digital landscape has led to the emergence of a multitude of digital technologies. New technology, however, inevitably brings with it both exciting new opportunities and challenges, which need to be approached with a note of caution.

By Manoj Karanth, GM and Head – Big Data Analytics and Cloud, Digital Business, Mindtree.

Cloud technology has been at the heart of this. Companies across sectors have taken swift action to adopt and incorporate some form of cloud computing offering into their digital approach. These new technologies have the capacity to reshape the competitive landscape, but they must be managed in the right way to be effective.

The latest global survey from HBR Analytic Services, released in April of last year, found that 85 per cent of organisations surveyed, across a host of sectors, territories and industries, planned to use cloud tools moderately to extensively over the course of the next three years. This figure, if nothing else, is symbolic of the importance that businesses attach to the cloud’s ability to reshape organisations’ approach to digital.

The cloud has the capacity to increase businesses’ speed and agility, shape new business models, foster innovation and ideas, and expand collaboration across the workforce. It is no surprise therefore that the survey results revealed a high adoption rate.

Aligning business and IT objectives is crucial to widespread successful implementation of cloud technology across organisations. IT teams cannot act alone, no matter what capacity they have. Business leaders need to take action too.

Together, they need to play a mutually strategic role in constructing new business models to both leverage and incorporate cloud technologies in agile and innovative ways. If not, they risk losing considerable market share or being left in their competitors’ wake altogether. This, however, is the transition that the vast majority of companies struggle with the most.

A recent Cloud User survey from leading market research firm Frost & Sullivan highlights the crux of this problem. According to the results, 57 per cent of IT decision-makers consider migration a major obstacle to moving their workloads to the cloud.

Sadly, cloud migration does not work in the same vein as the 1989 hit film Field of Dreams, in which a novice farmer has a vision to transform his cornfield into a baseball field and, by doing so, save his farm. Cloud migration is not simply a case of “if you build it, he will come”.

Leveraging cloud technologies to grow a business takes more than vision. It requires having the right people on your team to execute on it, and is simply too complex and risky to plan and implement alone.

Mapping the right route to a successful cloud journey

Strategy needs to be front and centre. Without a comprehensive road map and measurable business goals, migrating successfully will always be too big a mountain to climb.

The right anchor partner can help you customise and develop the right business case, using metrics and incremental ROI objectives as markers along that journey. An anchor partner can analyse and evaluate the best cloud approach (whether public, private or hybrid) and cloud deployment type (IaaS, PaaS or SaaS) to achieve strategic business goals. Under these banners fall readiness assessments, change management and audits to assess your security and system architecture requirements.

Once these initial strategic foundations have been laid, an anchor partner is then responsible for determining the proper sequence of application and data migration. This deep portfolio analysis includes an application-to-cloud suitability analysis of the current state, a review of migration intentions and the development of a suitable timeline, as well as enhancing them where needed.

Doing this work from the ground up builds the basis of a unified view of the company’s application infrastructure, and then identifies and prioritises the relevant enterprise systems, applications and data that critically need to be moved to the cloud.
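As a rough illustration of how such a prioritisation might be recorded, the short Python sketch below ranks a portfolio by a simple score. The application names, the 1 to 5 scales and the scoring rule (business value multiplied by cloud suitability) are all hypothetical; a real portfolio analysis would weigh many more factors, such as dependencies, security and cost.

```python
from dataclasses import dataclass


@dataclass
class Application:
    name: str
    business_value: int     # hypothetical scale: 1 (low) to 5 (high)
    cloud_suitability: int  # hypothetical scale: 1 (hard to move) to 5 (easy to move)


def migration_order(apps):
    """Sort applications so that high-value, cloud-ready ones come first."""
    return sorted(
        apps,
        key=lambda app: app.business_value * app.cloud_suitability,
        reverse=True,
    )


# Hypothetical portfolio used purely for illustration.
portfolio = [
    Application("legacy-billing", business_value=5, cloud_suitability=1),
    Application("customer-portal", business_value=4, cloud_suitability=5),
    Application("internal-wiki", business_value=2, cloud_suitability=4),
]

ordered = [app.name for app in migration_order(portfolio)]
```

With these made-up scores the customer portal would move first and the legacy billing system last, reflecting that a valuable but hard-to-move application may be better left until later in the sequence.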

Keep your eyes on the ball

Whether it’s moving data sets or entire workloads, transitioning to the cloud ties up IT resources, which are needed elsewhere. An anchor partner is the glue that holds this all together. They are with you every step of the way, allowing the IT team to stay focussed on what it does best – building and supporting great products and services.

Businesses may begin in the cloud, but the journey is different for each organisation. No digital transformation is the same. Choosing the right anchor partner provides businesses with faster application implementation and deployment, reduced infrastructure overheads and greater flexibility to scale resources on demand.

Whether businesses do this by freeing up budgets for digital transformation or tapping into cutting-edge ways to store, analyse, and segment big data, improving market efficacy through personalisation will always be priority number one.

Businesses ultimately want to maximise the opportunities that the cloud has to offer. This is regardless of their current presence in the cloud.

Not that long ago, the Internet of Things (IoT) was a somewhat vague concept that seemed to belong to a distant future or sci-fi films. However, as technology continues to advance at a rapid pace and transform business models, IoT is quickly becoming a reality, revolutionising the way we live and work.

By Craig Smith, EMEA Director for IoT & Analytics Solutions and Services, Tech Data.

Businesses across the world are adapting to the new technologies associated with this and seeing the benefits that it can bring. From increased business efficiency to enhanced customer engagement, the potential rewards mean that now is the time to get involved, if you’re not already.

IoT is transforming the way businesses operate across many different industries. In retail, for example, digital signage, beacons and “smart shelves” are revolutionising the way that businesses communicate not only with their customers, but with different players in their supply chains, from shipping, to warehousing and distributing. Local governments are also seeing the benefit of IoT with applications like smart lighting and traffic systems allowing for more efficient allocation of budgets and resources. Sensors in our roads, for instance, can “talk” to vehicles and traffic light systems to optimise the flow of traffic in cities. The drive for city planners to adopt this type of technology is part of the move towards making smart cities a reality. Sadiq Khan, the Mayor of London, has recently shared his vision for London to become the world’s leading smart city. This will require the adoption of a range of smart technologies, moving towards more ambitious projects that make full use of the mass of data that city planners have at their disposal through smart technology.